At the beginning of 2025, the AI field witnessed a significant shift: the open-source release of DeepSeek R1. Thanks to its low cost and outstanding performance, the model attracted unprecedented attention within just a few weeks. Because the official website's servers were frequently congested, users turned to tools like Ollama+OpenWebUI and LM Studio for rapid local deployment, bringing AI capabilities to enterprise intranets and personal PCs.

Recently, Tencent's Zhuque Lab discovered that these popular AI tools commonly contain security vulnerabilities. If used improperly, attackers could steal user data, abuse computing resources, or even control user devices.

This article discusses the security issues of these popular AI tools and shows how to use the open-source AI-Infra-Guard to detect and mitigate the related risks with a single click.

I. Ollama

Ollama is an open-source application that allows users to locally deploy and operate large language models (LLMs) on Windows, Linux, and macOS devices. Inspired by Docker, Ollama simplifies the process of packaging and deploying AI models and has now become the most popular solution for running large models on personal computers. Most articles on the internet about local deployment of DeepSeek R1 also recommend this tool.

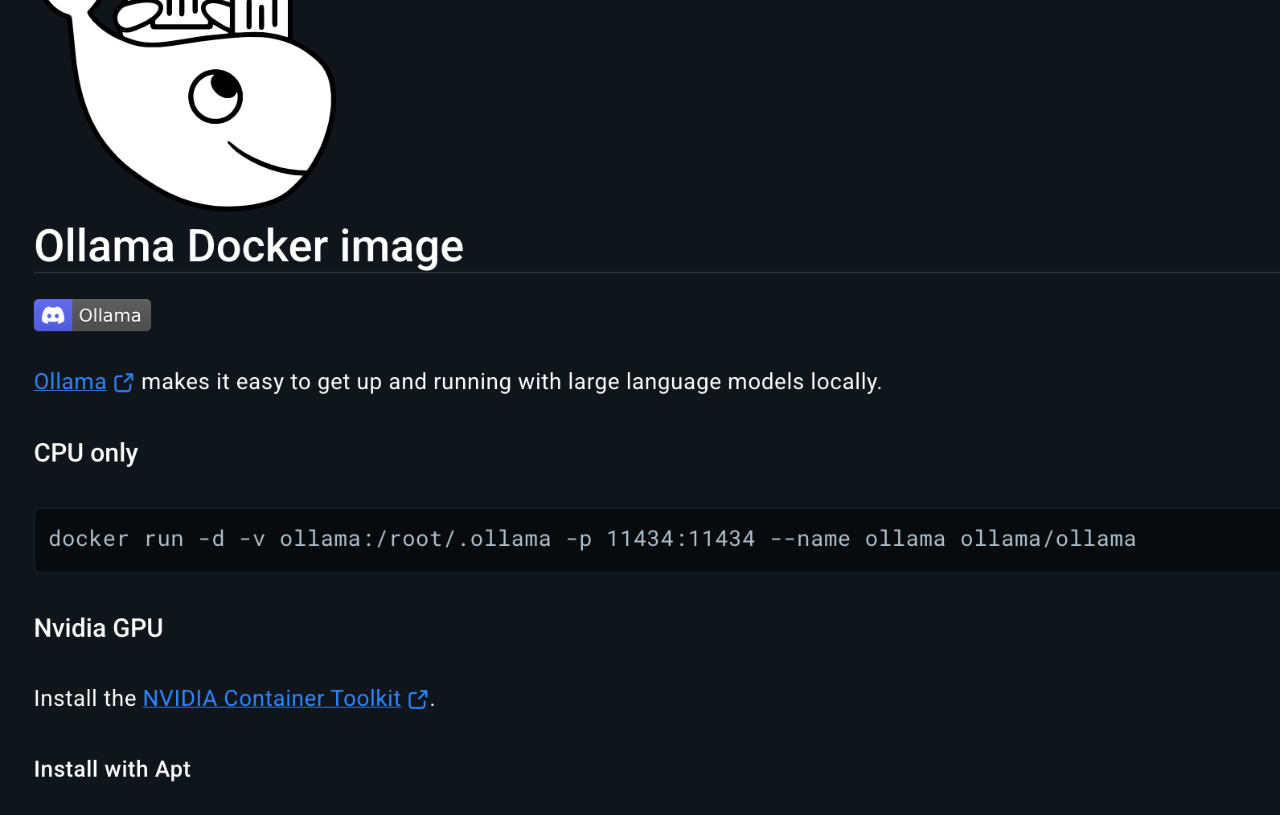

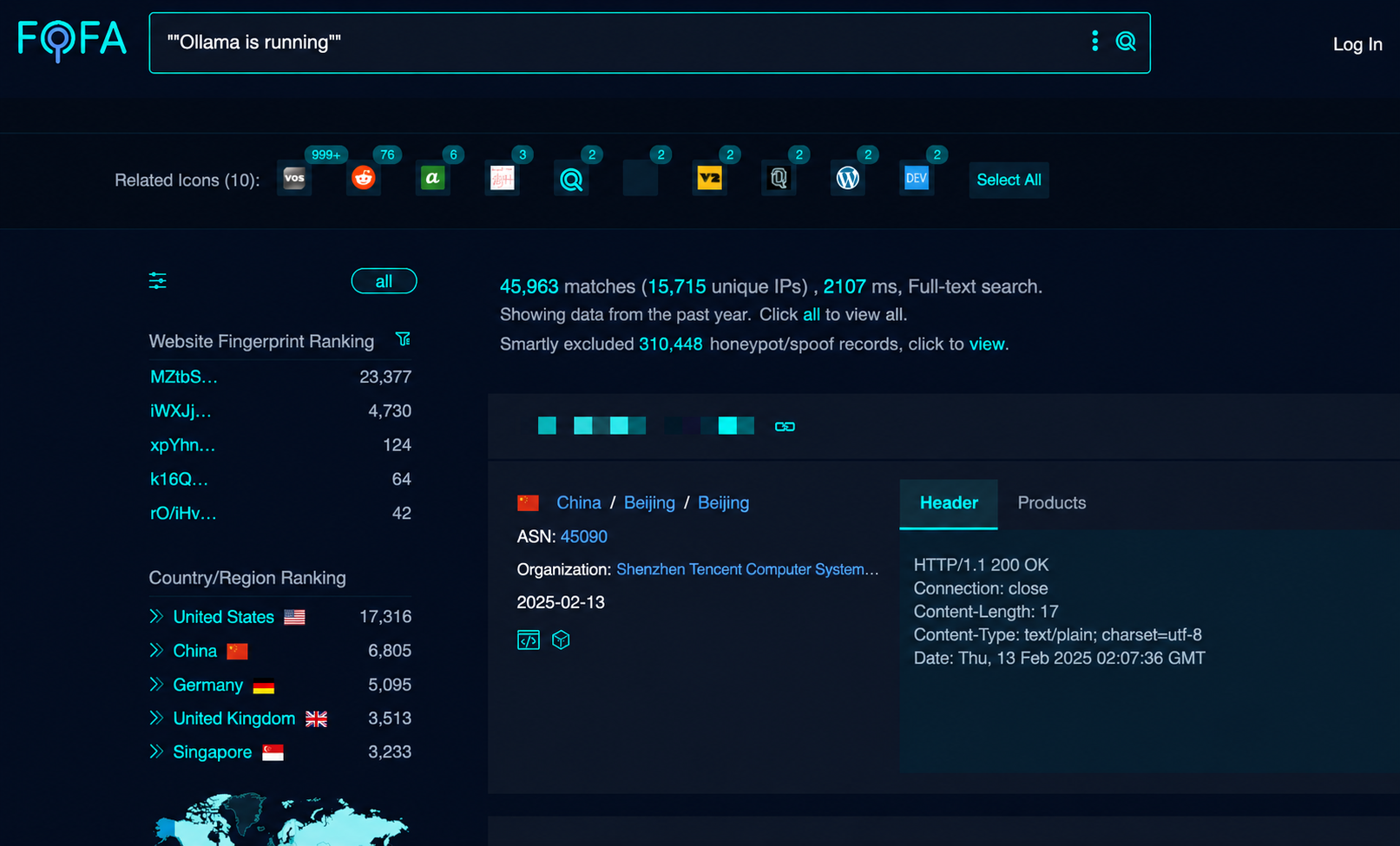

By default, Ollama opens port 11434 on startup and exposes its core functionality through a RESTful API, including downloading models, uploading models, and chatting with models. A native installation typically exposes this port only locally, but the Ollama Docker container starts with root privileges and exposes the port to the public internet by default.

Ollama generally provides no authentication for these interfaces, so once attackers find an open Ollama service by scanning, they can carry out a range of attacks.
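To check whether one of your own deployments is reachable without credentials, a small probe along the following lines may help. It is a minimal sketch, not part of AI-Infra-Guard: it assumes the model-listing endpoint `GET /api/tags` returns a JSON object with a `models` key, and the function names `http_get` and `is_ollama_open` are hypothetical. Run it only against hosts you are responsible for.

```python
import json
import urllib.request
from typing import Callable


def http_get(url: str, timeout: float = 3.0) -> tuple[int, str]:
    """Fetch a URL and return (status code, body text)."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.status, resp.read().decode("utf-8", errors="replace")


def is_ollama_open(
    base_url: str,
    fetch: Callable[[str], tuple[int, str]] = http_get,
) -> bool:
    """Return True if base_url answers the model-listing API without credentials."""
    try:
        status, body = fetch(base_url.rstrip("/") + "/api/tags")
    except OSError:
        # Unreachable, refused, timed out, or an HTTP error status such as 401.
        return False
    if status != 200:
        return False
    try:
        data = json.loads(body)
    except json.JSONDecodeError:
        return False
    # Assumption: an Ollama server lists its models under a "models" key.
    return isinstance(data, dict) and "models" in data
```

For example, `is_ollama_open("http://127.0.0.1:11434")` probes a local instance. The `fetch` parameter exists only so the logic can be exercised without network access.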

1) Model deletion

For example, an attacker can delete a model through the API.

2) Model theft

An attacker can first list the models on the Ollama server through the API.

Ollama supports custom mirror sources. By setting up a self-built mirror (registry) server and then calling the API, an attacker can easily steal private model files.

3) Computing power theft

After listing the models through the API, an attacker can send inference requests to them and consume the target machine's computing power.

4) Poisoning the model

An attacker can list the running models through the API, download a poisoned model, delete the legitimate model, and then move the poisoned model to the legitimate model's path through the API, so that the user's conversations are served by the poisoned model.

5) Remote command execution vulnerability CVE-2024-37032

In June 2024, a serious remote command execution vulnerability, CVE-2024-37032, was disclosed in Ollama. It is a critical path traversal flaw in the Ollama open-source framework with a CVSSv3 score of 9.1. It affects Ollama versions prior to 0.1.34: by forging a manifest file on a self-built mirror (registry) server, an attacker can achieve arbitrary file read/write and remote code execution (RCE).
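The version boundary stated above can be checked mechanically. The following is a minimal sketch with hypothetical helper names (`parse_version`, `is_affected`). It assumes the installed version is available as a string containing an `X.Y.Z` triple, for example from `ollama --version`, and it ignores pre-release suffixes.

```python
import re

# Per the text above: versions prior to 0.1.34 are affected by CVE-2024-37032.
FIXED_VERSION = (0, 1, 34)


def parse_version(text: str) -> tuple[int, ...]:
    """Extract the first X.Y.Z triple from a version string."""
    match = re.search(r"(\d+)\.(\d+)\.(\d+)", text)
    if match is None:
        raise ValueError(f"no X.Y.Z version found in {text!r}")
    return tuple(int(part) for part in match.groups())


def is_affected(version_text: str) -> bool:
    """Return True if the version is older than the first fixed release."""
    # Tuples compare numerically, so 0.1.9 sorts before 0.1.34.
    return parse_version(version_text) < FIXED_VERSION
```

Comparing integer tuples rather than raw strings matters here: a plain string comparison would rank `0.1.9` above `0.1.34`.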

Mitigation

Upgrade to the latest version of Ollama. Note that Ollama currently offers no official authentication mechanism, so when running ollama serve, make sure the environment variable OLLAMA_HOST is set to a local address (for example 127.0.0.1) so that the service is not reachable from the public internet. It is recommended to run Ollama locally and put a reverse proxy (such as Nginx) in front of it to add access protection.
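As a quick sanity check on the OLLAMA_HOST advice, the sketch below classifies a configured value as loopback-only or not. The helper names `bind_host` and `is_loopback_only` are hypothetical, and the accepted input forms (bare host, `host:port`, or a full URL) are an assumption about how the variable is commonly written. Anything that cannot be shown to be a loopback address is treated as not local.

```python
import ipaddress
from urllib.parse import urlsplit


def bind_host(ollama_host: str) -> str:
    """Extract the host from values like '0.0.0.0', '127.0.0.1:11434' or 'http://[::1]:11434'."""
    value = ollama_host.strip()
    if "://" not in value:
        value = "//" + value  # let urlsplit treat it as a network location
    return urlsplit(value).hostname or ""


def is_loopback_only(ollama_host: str) -> bool:
    """Return True only if the configured host is provably a loopback address."""
    host = bind_host(ollama_host)
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Empty value or a non-IP hostname: not provably local.
        return False
```

For instance, `is_loopback_only(os.environ.get("OLLAMA_HOST", "127.0.0.1"))` (after `import os`) could be run in a deployment script before starting the service; the fallback value there is itself an assumption about the default.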

According to statistics, there are still about 40,000 unprotected Ollama services on the public internet. Please check whether your deployment is secure.

II. OpenWebUI

OpenWebUI is currently the most popular web UI for large-model chat, offering features such as model chat, image upload, and RAG, and it integrates easily with Ollama. It is also a common companion for local deployments of DeepSeek. However, OpenWebUI has a history of vulnerabilities; a typical example is described here.

[CVE-2024-6707] A single file can hack your AI

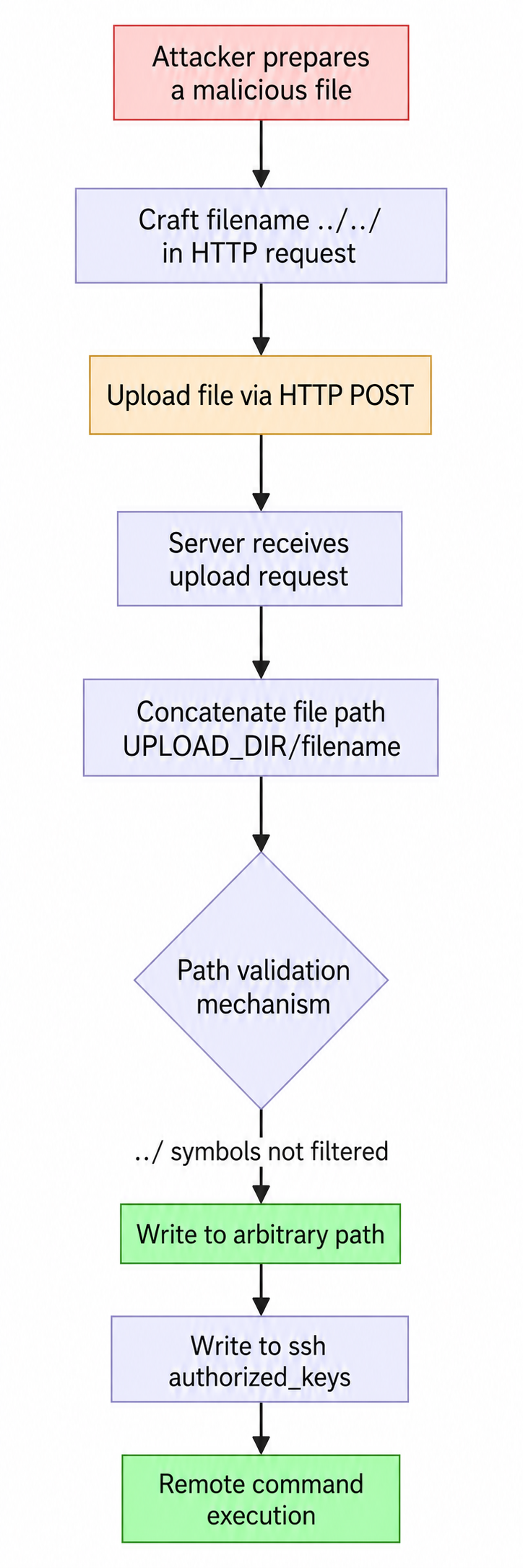

When a user uploads a file by clicking the plus sign (+) to the left of the message input box through the Open WebUI HTTP interface, the file is stored in a static upload directory. The uploaded filename can be forged and is not validated, allowing attackers to upload files to any directory by constructing filenames containing path traversal characters (such as ../../).

Attackers can execute arbitrary code by uploading a malicious model (such as a file containing Python serialized objects) that is later deserialized, or gain remote command execution by uploading an authorized_keys file.
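The class of bug described above is usually prevented by validating the client-supplied filename before writing to disk. The following is a generic sketch of that technique, not Open WebUI's actual fix; `safe_upload_path` is a hypothetical helper. It keeps only the final path component and then verifies that the resolved path still lies under the upload directory.

```python
import os


def safe_upload_path(upload_dir: str, filename: str) -> str:
    """Return a path inside upload_dir for filename, or raise ValueError."""
    # Keep only the final path component; treat backslashes as separators too.
    name = os.path.basename(filename.replace("\\", "/"))
    if name in ("", ".", ".."):
        raise ValueError(f"unsafe filename: {filename!r}")
    root = os.path.realpath(upload_dir)
    target = os.path.realpath(os.path.join(root, name))
    # Defense in depth: the resolved path must still be under the upload root
    # (this also catches a symlink inside the directory pointing elsewhere).
    if os.path.commonpath([root, target]) != root:
        raise ValueError(f"path escapes upload directory: {filename!r}")
    return target
```

With this check, a forged name such as `../../root/.ssh/authorized_keys` is reduced to `authorized_keys` inside the upload directory instead of being written to the traversal target. Rejecting such names outright is an equally valid design choice.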

The flowchart is as follows:

Mitigation

Upgrade to the latest version, and avoid enabling the user system.

III. ComfyUI

ComfyUI is currently the most popular diffusion model application, known for its rich plugin ecosystem and highly customizable nodes, and is often used for tasks such as text-to-image and text-to-video generation.

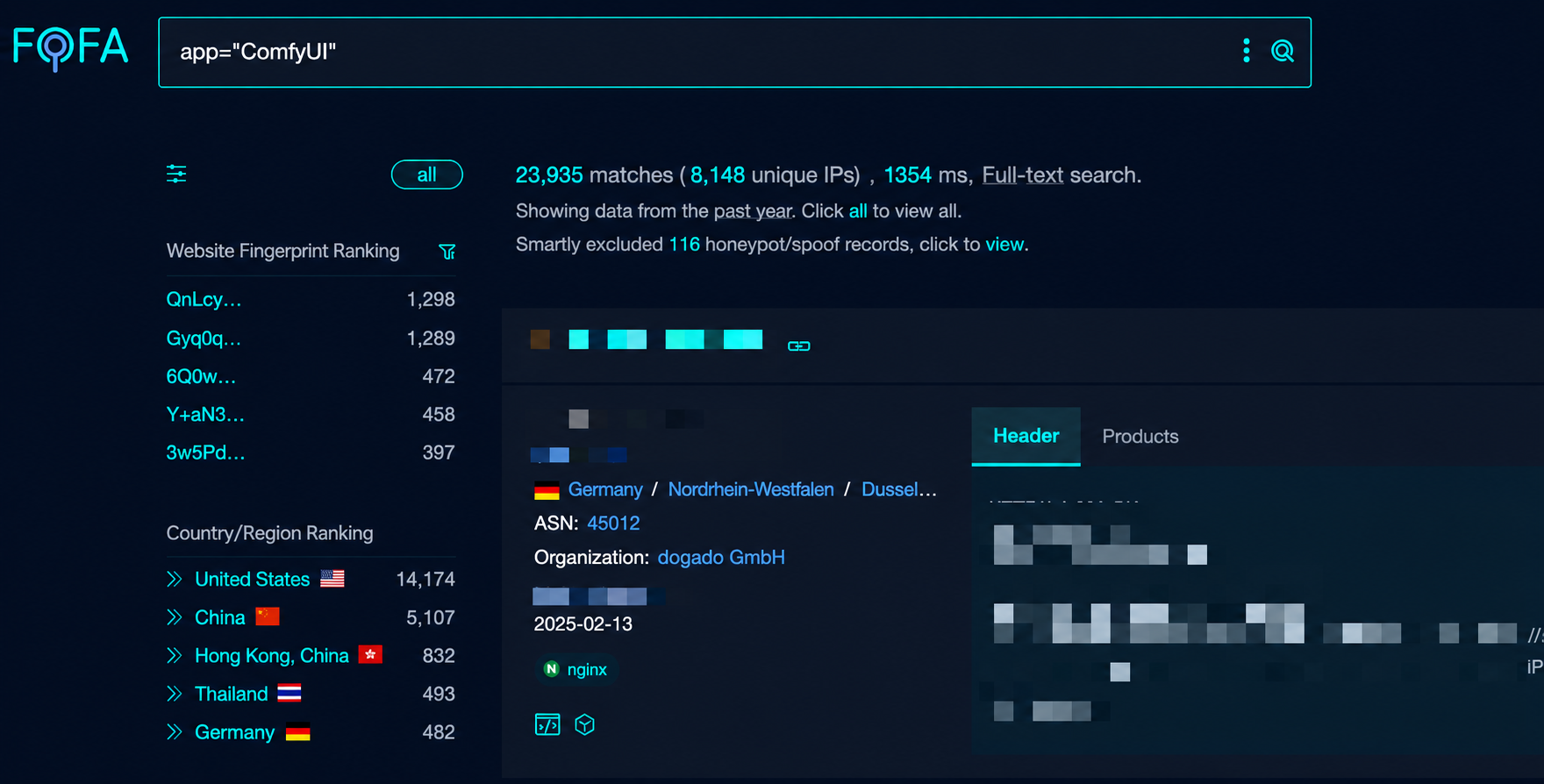

As with Ollama, the developers may originally have intended ComfyUI only for local use, so it has no authentication of any kind, yet many ComfyUI instances are exposed to the public internet.

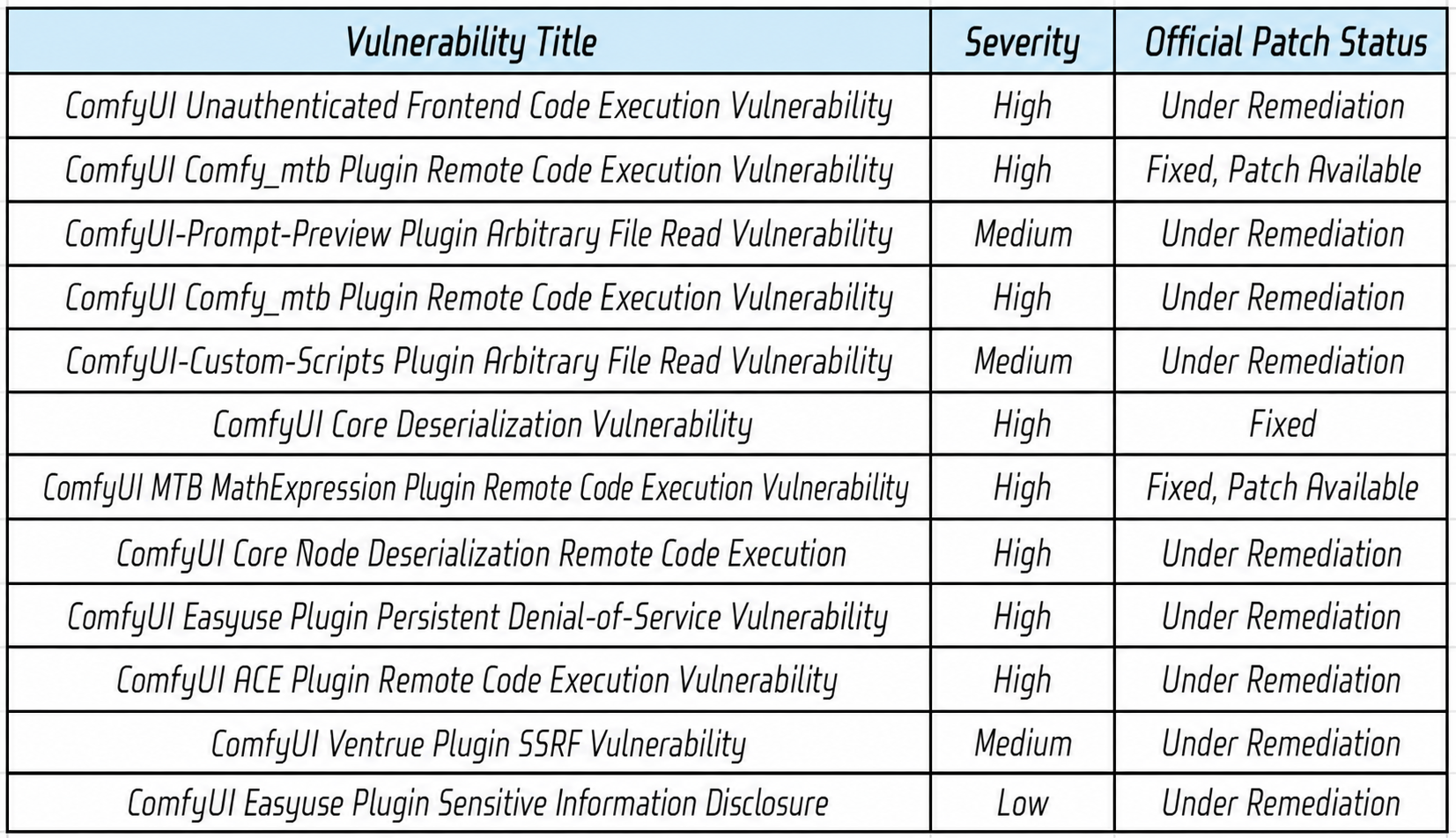

ComfyUI is known for its plugin ecosystem, but plugin authors are generally individual developers who don't pay much attention to security. Tencent's Zhuque Lab discovered several vulnerabilities in ComfyUI and its plugins last year.

Zhuque Lab's past vulnerability discoveries include:

Most of the vulnerabilities mentioned above affect the core code of the entire ComfyUI series (including the latest version) and some popular plugins, with impacts including remote command execution, arbitrary file read/write, and data theft.

Mitigation

Because vulnerabilities are patched slowly, the latest version of ComfyUI still contains vulnerabilities, so exposing it to the public internet is not recommended.

IV. AI-Infra-Guard: One-click detection and prevention of AI risks

Over the past year, the Zhuque Blue Team has conducted in-depth research and practice on the security of the Hunyuan large model, gradually putting in place a software supply chain security solution for large models. The project can discover AI security threats in a lightweight, fast, and non-intrusive way. By using large models to collect vulnerabilities, it has helped close several blind spots around the risk of "open-source software supply chain vulnerabilities leading to Hunyuan leakage," demonstrating the potential of large models to empower security work.

A typical scenario:

Security team: "Please, please enable authentication for Ollama first!"

Algorithm team: "But the documentation doesn't mention needing security configuration..."

Operations team: "I've never heard of this framework before, how do I scan?"

It is precisely these pain points that gave birth to AI-Infra-Guard.

What is AI-Infra-Guard?

AI Infra Guard (AI Infrastructure Guard) is a powerful, lightweight, and easy-to-use security assessment tool for AI infrastructure, designed specifically to discover and detect potential security risks in AI systems. It currently supports the detection of 30 AI components, including not only common AI applications like Dify, ComfyUI, and OpenWebUI, but also development and training frameworks such as Ragflow, LangChain, and Llama-factory.

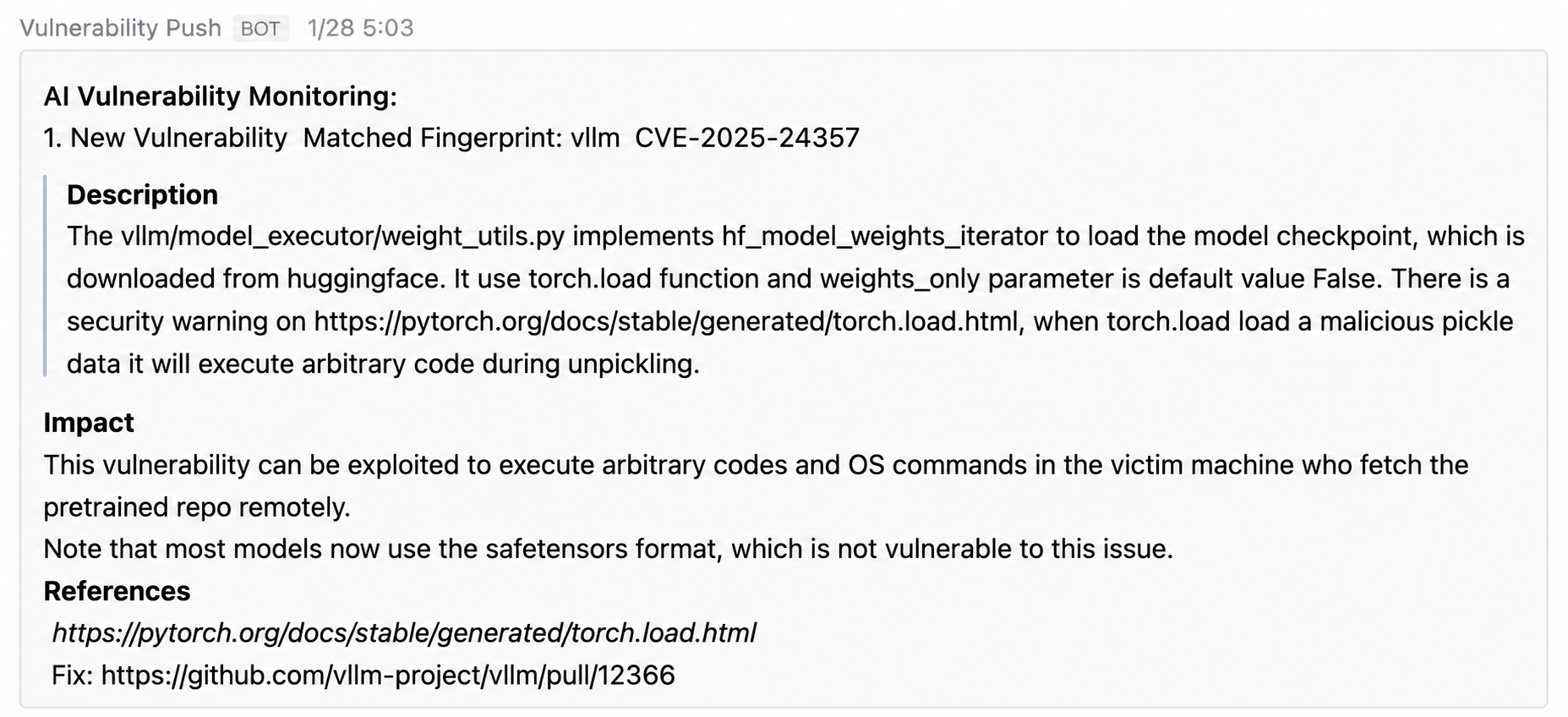

1) Automatically accumulate vulnerability rules through large models

To address the high cost of manually analyzing CVE rules for a large number of AI components, we implemented a solution that collects historical vulnerabilities automatically using a large model. Traditionally, this required manually analyzing each CVE description and writing regular-expression matching rules, taking 3 hours per vulnerability. Now, with the Hunyuan large model, CVEs are synchronized automatically and parsed by the model into vulnerability detection logic in only 30 seconds. This also enables real-time monitoring of vulnerabilities related to AI components.

2) Easy to use

• Zero dependencies, ready to use out of the box, binary file only 8MB

• Memory usage < 50MB, no lag after scanning a cluster of 1,000 nodes.

• Cross-platform compatibility, supporting Windows/macOS/Linux

Usage

AI-Infra-Guard is open source on GitHub and currently includes fingerprints for 30+ AI applications and a database of 200+ security vulnerabilities. It also covers vulnerabilities in well-known AI components such as NVIDIA Triton, PyTorch, ComfyUI, and Ray that were exclusively discovered by Tencent Zhuque Lab.

For individual users who want to test their local AI component applications, the following command runs a one-click test:

./ai-infra-guard -localscan

This will check and identify open ports on the local machine and provide security recommendations.

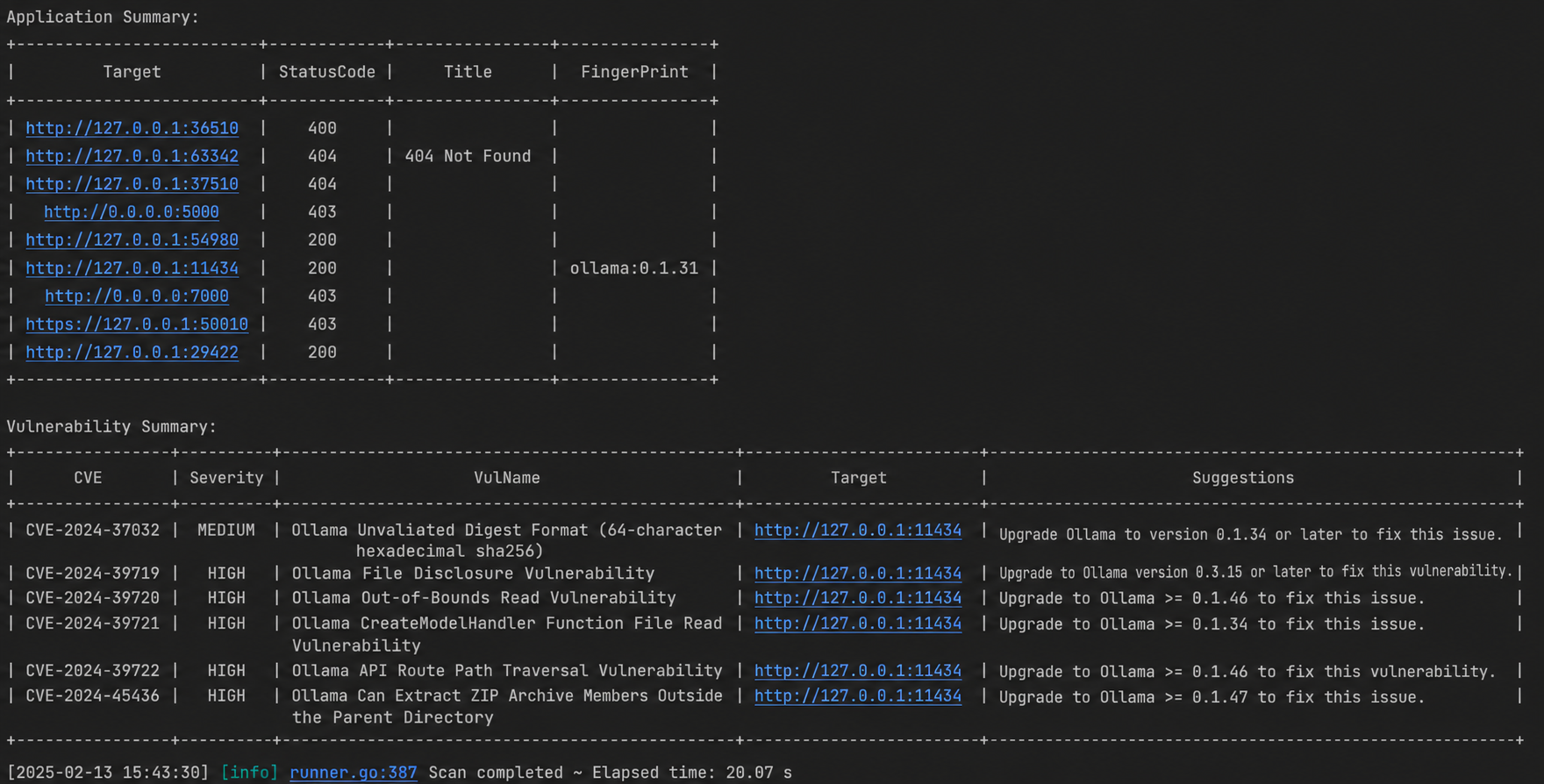

If a vulnerable version of Ollama, such as the one described above, is in use, the one-click test displays the following message:

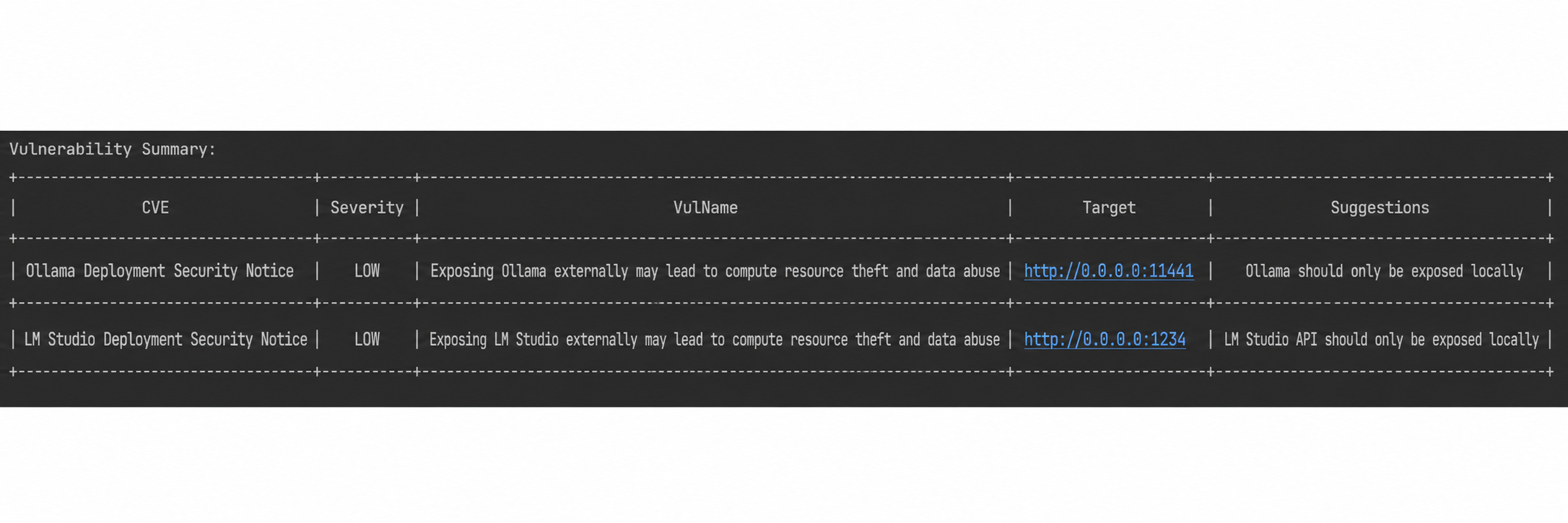

If the system detects that the AI service is publicly available on the internet, a notification will also be displayed.

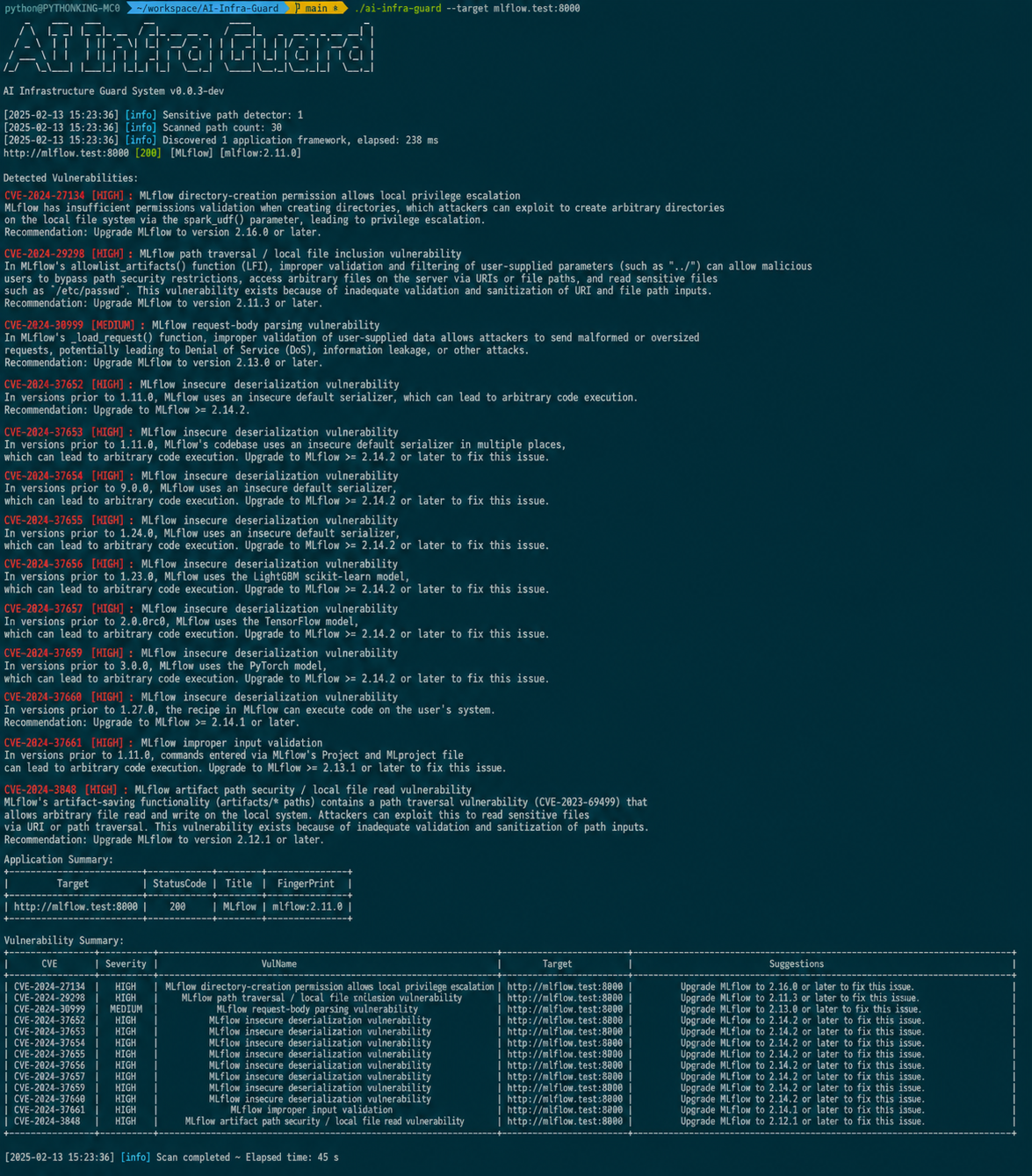

For developers and operations staff who want to test the security of deployed AI services, run one of the following commands.

Scan a single target:

./ai-infra-guard -target [IP:PORT/Domain]

Scan multiple targets:

./ai-infra-guard -target [IP:PORT/Domain] -target [IP:PORT/Domain]

Scan a network segment to find AI services:

./ai-infra-guard -target 192.168.1.0/24

Read scan targets from a file:

./ai-infra-guard -file target.txt

Where to get it

Open source address: https://github.com/Tencent/AI-Infra-Guard/

Download address (download the version corresponding to your system): https://github.com/Tencent/AI-Infra-Guard/releases

We welcome everyone to star, try out, and provide feedback on any issues with the tool!

Tencent Zhuque Lab, established in 2019 by Tencent's Security Platform Department, is a top-tier AI security lab focused on practical attack and defense and cutting-edge technology research in the field of AI security. Its research areas cover large-model security, AI agent security, AI-enabled security, and AI generation detection. The team has repeatedly assisted well-known companies such as NVIDIA, Google, and Microsoft, as well as open-source communities like OpenClaw, Linux, and Huggingface, in fixing numerous high-risk vulnerabilities, receiving official public acknowledgments. It has launched several AI security products, including the open-source AI red team security testing platform AIG (AI-Infra-Guard) and the Zhuque AI Detection Assistant. Research findings have been widely published at top international security and AI academic conferences such as Black Hat, DEF CON, ICLR, CVPR, NeurIPS, and ACL, and the monograph "AI Security: Technology and Practice" has been published.