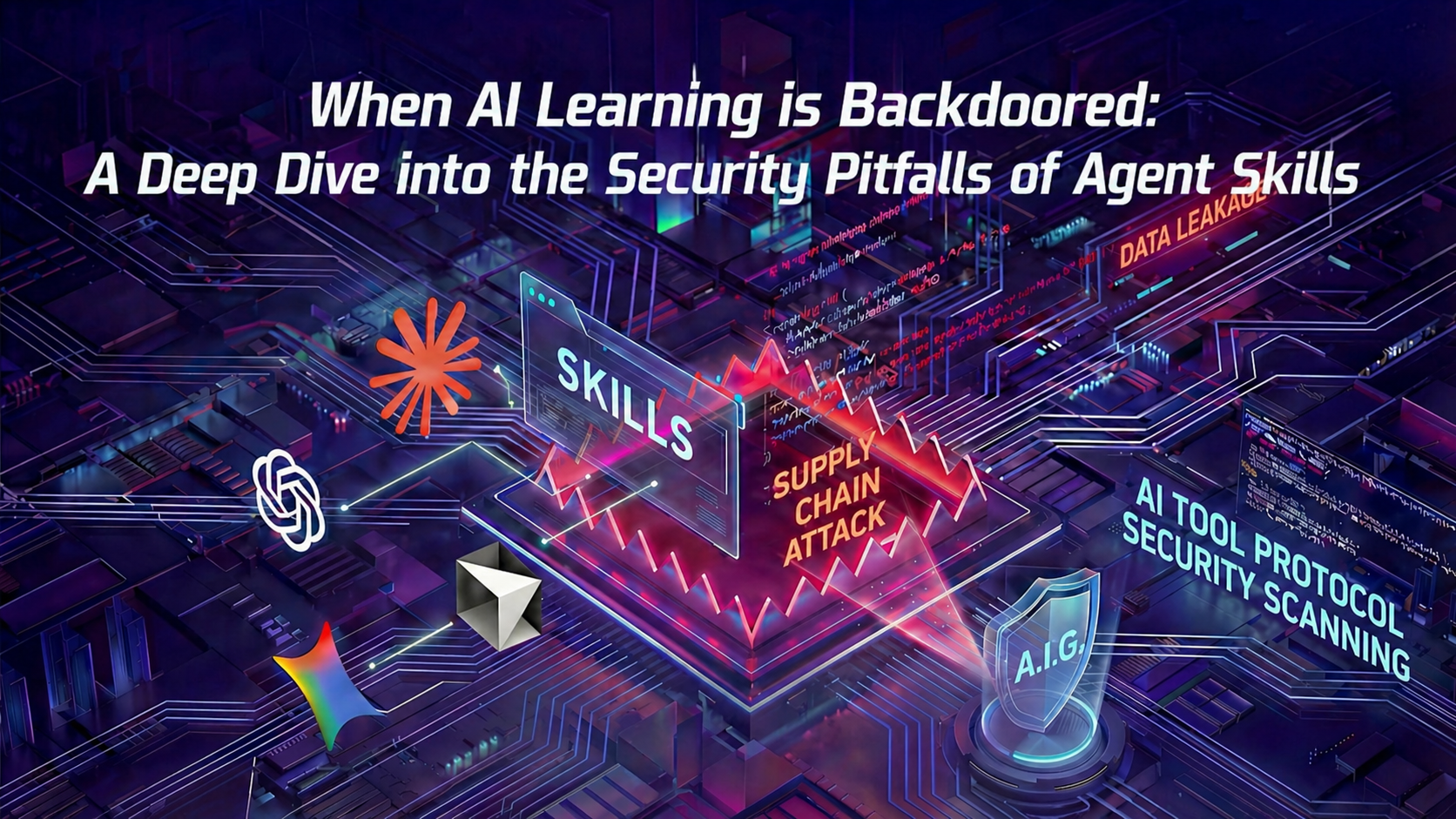

When AI Learns to Backstab: In-Depth Analysis of the Security Pitfalls of Agent Skills

This article examines the supply chain security risks hidden in Agent Skills used by AI coding assistants. The research shows how attackers can weaponize seemingly benign plugins, such as GIF makers or calculators, to steal sensitive keys, deploy ransomware, or establish remote control. The attack vectors include "hidden prompts," malicious scripts, and authorization flaws. Because these threats are largely expressed in natural language, traditional security tools struggle to detect them. In response, Tencent's Zhuque Lab has introduced A.I.G, an open-source platform that uses "AI to scan AI" to provide automated auditing and risk mitigation for a safer Agent ecosystem.
