Discovering 33 zero-day vulnerabilities: A glimpse into the second half of security attack and defense

On April 7, 2026, Anthropic, together with 45 organizations including Apple, Google, and Microsoft, launched Project Glasswing and announced that its unreleased frontier model, Claude Mythos Preview, had discovered thousands of zero-day vulnerabilities across all major operating systems and browsers and could develop exploit code on its own. Security experts predict that open-weight models may catch up within six months, at which point every ransomware group will be able to weaponize vulnerabilities quickly and cheaply. AI is compressing the entire timeline of vulnerability exploitation and defense, and doing so faster than most people anticipated.

Recently, Zhuque Lab's Blue Team Bot (a multi-agent platform for automated vulnerability discovery and attack/defense exercises) also completed a rapid round of vulnerability discovery against targets such as OpenClaw and the Linux kernel, finding 33 zero-day vulnerabilities in total, 17 of them critical or high-risk. All of them have been submitted upstream for fixes and have received official public acknowledgments from projects such as OpenClaw and the Linux kernel.

Frankly, the numbers themselves are not the most important part. What is truly noteworthy is that these results and the Mythos release point to the same conclusion: the second half of security offense and defense is no longer about who can find vulnerabilities; it is about who can expose the key risks before attackers do.

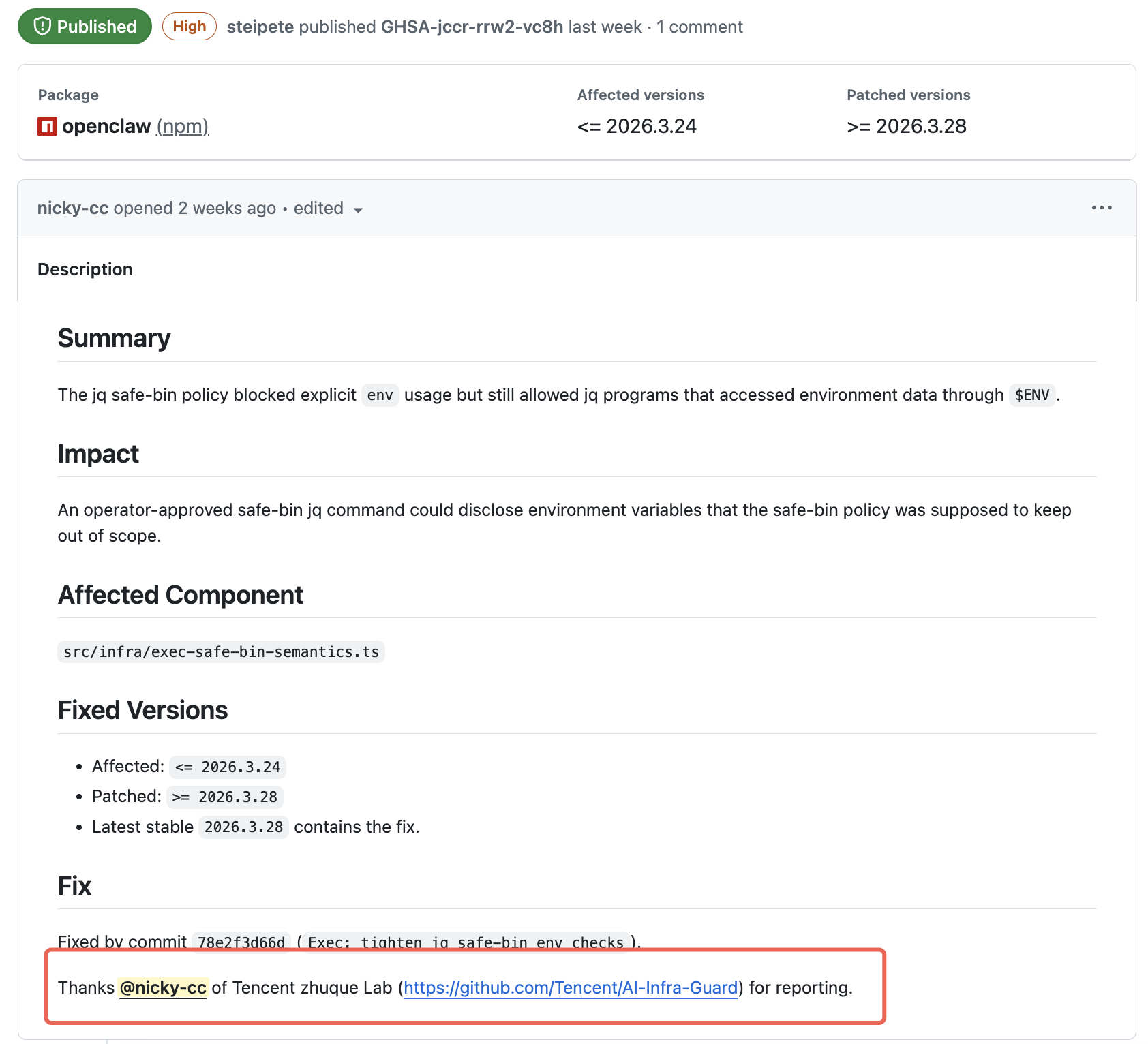

Several high-risk OpenClaw vulnerabilities reported by Zhuque Lab received official acknowledgments.

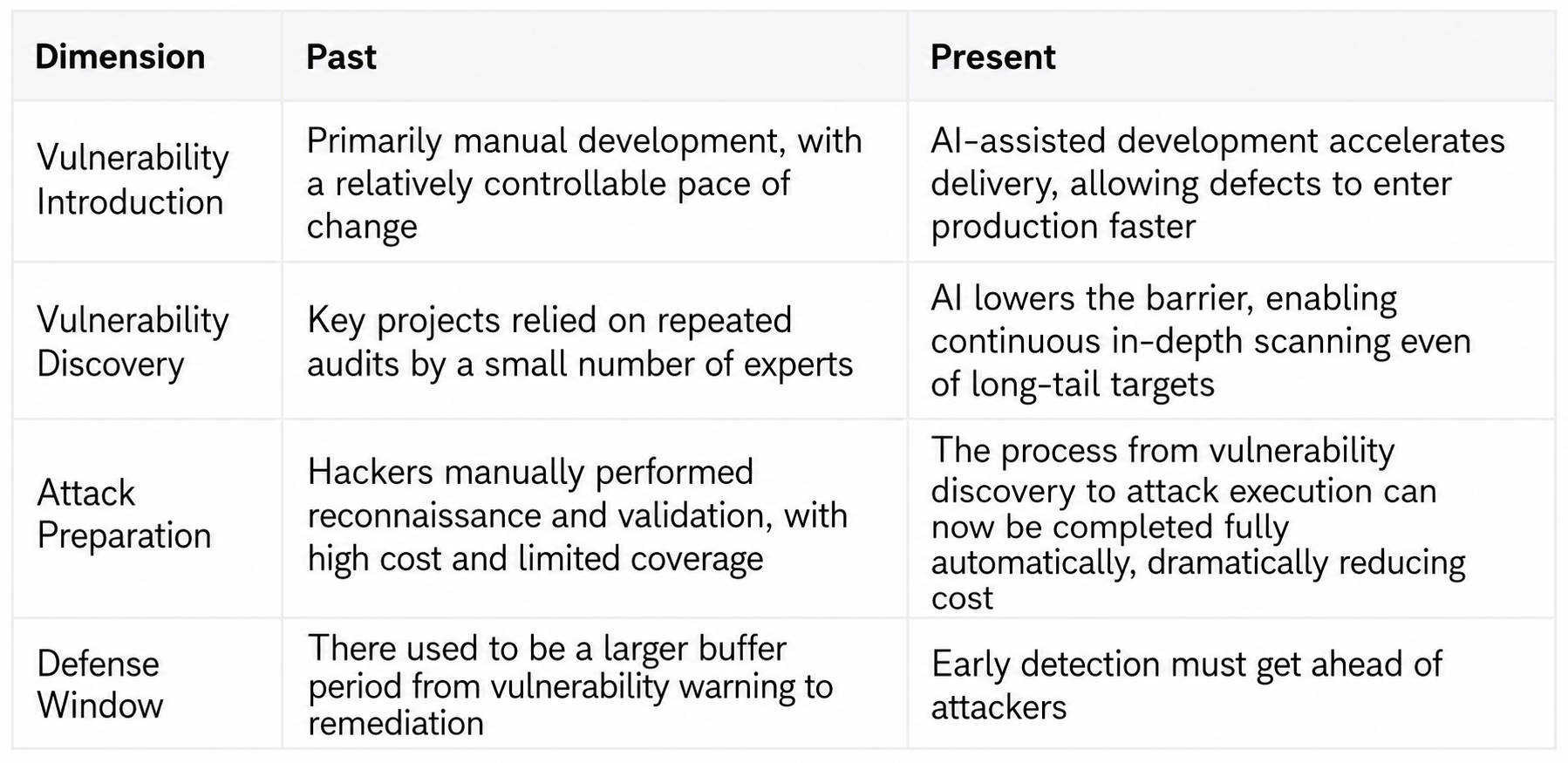

I. It's not just the number of vulnerabilities that has changed, but the entire rhythm of attack and defense

Many people think there are simply more vulnerabilities now. That is only part of the picture. What has really changed is the rhythm of the entire chain.

Finding zero-day vulnerabilities used to be like a master craftsman sharpening a knife by hand: it took intuition, experience, and a long immersion in a single codebase before a usable opening appeared. Now agents are mass-producing this craft. What is being rewritten is not just the number of vulnerabilities but the entire timeline, from a vulnerability's introduction through its discovery and verification to preparation for exploitation.

1.1 Why do vulnerabilities appear faster?

One reality of the vibe-coding era is becoming clear: code generation is accelerating, but overall security is not keeping pace. An April 2026 Cloud Security Alliance report provides evidence: AI-assisted development commits code 3 to 4 times faster than traditional methods, yet the rate of detected security risks rises tenfold, with architectural design flaws in particular up 153%. Large models are good at avoiding obvious vulnerabilities such as SQL injection but tend to miss systemic security flaws at the architectural level. What enters the business environment is not just more code, but code that is more likely to be flawed and more likely to be compromised.

1.2 Why are vulnerabilities being discovered faster?

The assessment of well-known security researcher Thomas Ptacek is key here: much of vulnerability research is essentially pattern matching, constraint solving, and relentless trial and error, which is exactly what large models are suited to. Targets that previously escaped audit because they were obscure, too scattered, or not worth the manual effort are now all on the automated scanning list. Last year, Google's Big Sleep found issues that traditional fuzzing had long failed to trigger. That is more than an efficiency gain: it moves vulnerability discovery from the hand workshop to industrial production. Claude Mythos is the latest milestone on this path.

1.3 Why must enterprises confront the issue head-on?

The concern is not only that open-source maintainers are overwhelmed, but also that external attackers are using the same AI capabilities to prepare large-scale, automated attacks. McKinsey's internal AI platform was once fully compromised by a hacker agent within two hours: the agent mapped the APIs at machine speed, chained vulnerabilities together, stole data, and even tampered directly with the system prompt of the AI platform.

This is what we call the second half. In the past, security teams were more like conducting regular inspections; now, it's more like racing against an ever-accelerating risk pipeline. And at the other end of this pipeline are equally accelerating automated attack capabilities.

II. Behind the 33 Zero-Day Vulnerabilities: How the Blue Team Bot Defuses Risks

Zhuque Lab began developing the Blue Team Bot in 2023. After several iterations, the latest version focuses on two lines of work. The first is risk mitigation in the software supply chain of AI infrastructure: all 33 zero-day vulnerabilities, 17 of them critical or high-risk, have entered public disclosure or remediation, covering projects such as OpenClaw, the Linux kernel, and Langflow. The second is practice on real business systems: in blue team exercises for several key businesses, the Blue Team Bot has found, and helped fix, a large number of vulnerabilities with real impact.

2.1 Software Supply Chain Risk Mitigation: First, Identify and Address Potential Security Pitfalls in AI Infrastructure

Open-source AI agent frameworks like OpenClaw are growing rapidly and integrating quickly into business stacks, but they also operate on high-risk boundaries such as sandboxing, plugins, authentication, and configuration. Once they enter a real business environment, the risks will directly impact the enterprise's own identity system, sensitive configurations, and supply chain security. The primary goal of the Blue Team Bot is to eliminate the most critical risks in the software supply chain.

In a short period, the Blue Team Bot discovered 18 OpenClaw vulnerabilities, including 10 high-risk ones. All have been fixed and have received public acknowledgment from the OpenClaw team. Users can quickly perform a self-check using the "OpenClaw Security Checkup" function on AIG (AI-Infra-Guard) (https://github.com/Tencent/AI-Infra-Guard). This means that these fixes not only protect Tencent's own business environment but also directly benefit every downstream user in the OpenClaw, Linux, Langflow, and other open-source ecosystems. For global enterprises and developers who are adopting these AI infrastructure components on a large scale, these fixes represent fewer pitfalls in their software supply chain.

We will present three of the most representative cases—each illustrating value at a different level.

OpenClaw Environment Variable Leakage Vulnerability (High Risk, GHSA-jccr-rrw2-vc8h, Official Acknowledgement). OpenClaw is one of the most active open-source AI agent frameworks, and many enterprises build cloud-based agent products on it. The real danger lies not only in its popularity but in the fact that, once deployed, it can reach a wide range of sensitive data and tools on cloud servers. The vulnerability stems from the jq safe-bin policy: to prevent data leakage, OpenClaw disables the `env` keyword in its default allowlist of executable tools, but it overlooks jq's built-in `$ENV` object. An attacker can use `$ENV` to bypass the policy and read configuration and keys from the server's environment variables. Once an attacker obtains AK/SK pairs, Git credentials, model API keys, and the like, that is often the starting point for deeper penetration. OpenClaw has acknowledged the vulnerability and merged the security patch into its main branch, so every enterprise and developer using OpenClaw benefits directly from the fix.
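To make the gap concrete, here is a minimal Python sketch of how a keyword denylist can block `env` yet miss `$ENV`. It is not OpenClaw's actual policy code: the regular expressions and the function names `naive_allows` and `stricter_allows` are hypothetical, chosen only to illustrate the class of bypass described above.

```python
import re

# Hypothetical denylist that only knows about jq's `env` builtin.
# The match is case-sensitive, so the `$ENV` variable slips through.
NAIVE_DENY = re.compile(r"\benv\b")

# Hypothetical stricter denylist that also rejects the `$ENV` variable.
STRICT_DENY = re.compile(r"\benv\b|\$ENV\b")


def naive_allows(jq_filter: str) -> bool:
    """Return True if the naive policy would let this jq filter run."""
    return NAIVE_DENY.search(jq_filter) is None


def stricter_allows(jq_filter: str) -> bool:
    """Return True if the stricter policy would let this jq filter run."""
    return STRICT_DENY.search(jq_filter) is None


if __name__ == "__main__":
    for candidate in ("env.MODEL_API_KEY", "$ENV.MODEL_API_KEY", ".items[] | .name"):
        print(candidate, naive_allows(candidate), stricter_allows(candidate))
```

In this sketch the naive policy rejects `env.MODEL_API_KEY` but accepts `$ENV.MODEL_API_KEY`, while the stricter policy rejects both. String matching of this kind remains fragile in general; a more robust option, where feasible, is to launch the child process with a scrubbed environment so that there is nothing sensitive left to read.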

Langflow Agentic Assistant Execution Chain Vulnerability (Critical, CVE-2026-33873). Notably, this is not a classic legacy bug; the problem lies in the AI feature chain itself. The Agentic Assistant's validation phase directly executes Python code generated by the LLM, so an authenticated user without administrator privileges can execute arbitrary code on the server by influencing the model's output. This is a new kind of risk shared by general-purpose agent frameworks: the boundary between model output, tool invocation, and the execution environment is breached. These vulnerabilities have been added to the AI infrastructure vulnerability database of our open-source AI red team security testing platform, AIG (AI-Infra-Guard) (https://github.com/Tencent/AI-Infra-Guard), giving the community earlier risk warnings and detection capabilities and raising the security baseline of the whole agent framework ecosystem.
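The boundary problem can be illustrated with a small Python sketch that inspects model-generated code statically instead of executing it. This is not Langflow's code or its fix: the function `check_generated_code` and the `FORBIDDEN_CALLS` set are hypothetical, and a static check like this is not a sandbox. It only shows that "validating" generated code does not have to mean running it.

```python
import ast
from typing import Tuple

# Hypothetical set of builtins the generated code may not call directly.
FORBIDDEN_CALLS = {"exec", "eval", "compile", "__import__", "open"}


def check_generated_code(source: str) -> Tuple[bool, str]:
    """Statically inspect model-generated code without running it."""
    try:
        # ast.parse only builds a syntax tree; nothing is executed.
        tree = ast.parse(source)
    except SyntaxError as exc:
        return False, f"syntax error: {exc.msg}"

    for node in ast.walk(tree):
        if isinstance(node, (ast.Import, ast.ImportFrom)):
            return False, "imports are not allowed"
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in FORBIDDEN_CALLS
        ):
            return False, f"call to {node.func.id} is not allowed"
    return True, "ok"


if __name__ == "__main__":
    print(check_generated_code("total = sum([1, 2, 3])"))
    print(check_generated_code("import os\nos.system('id')"))
```

Checks like these can be evaded by determined input, so if generated code must eventually run, it would still need an isolated execution environment with no access to host credentials.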

Linux Kernel Bluetooth Vulnerabilities (High Risk, CVE-2025-39981/39982). These are use-after-free bugs in the Bluetooth MGMT module: under race conditions a pending structure is freed too early, which can lead to kernel crashes and even privilege escalation, affecting server availability and data security. Further findings were made in several other kernel modules, including rxrpc. The Linux kernel has been scrutinized by security teams worldwide for many years; it has a large codebase, a long audit history, and a complex patching process. By combining the agents' code understanding with a fuzzing framework, the Blue Team Bot has been specifically optimized for discovering low-level kernel vulnerabilities. The patches have been merged into the Linux kernel mainline, benefiting not only Tencent's own server clusters but every device running Linux.

The three cases cover three layers: upstream vulnerability mitigation for popular AI agent frameworks, risk profiling for the ecosystem of general agent frameworks, and supply chain security for underlying operating system infrastructure. What they have in common is that each fix was fed back to the open-source community through a responsible vulnerability disclosure process, benefiting developers worldwide.

2.2 Real-world business system practice: Deploying automated penetration testing capabilities into the business environment

Beyond reducing upstream risk in the supply chain, exercises against business systems are equally important for putting these capabilities into practice.

The Zhuque Blue Team Bot has been widely deployed and used in Tencent's internal business system exercises. Because every vulnerability has been verified in real-world scenarios, the cost of manual intervention by Blue Team experts has been significantly reduced.

In addition, to compare it with similar AI-driven penetration testing tools, the Blue Team Bot was run against the 104 challenges of the XBOW security benchmark. It completed 102 of them (98%), surpassing Shannon, the most popular open-source AI penetration testing project (37.4k GitHub stars), and exceeding traditional manual black-box testing in basic coverage.

Behind these results is the Agent Teams and Harness Engineering system of Blue Team Bot 4.0, which now runs stably in black-box, white-box, and real business environments. Many challenges remain in automating the verification and exploitation of complex vulnerabilities, but each round of model upgrades makes the harness's amplification effect more pronounced.

III. Three Judgments in the Second Half of Security Offense and Defense

3.1 The shelf life of vulnerabilities is rapidly shortening, and the pace of traditional security drills can no longer cover the risks.

In the past, the window between the introduction and discovery of a vulnerability could be as long as several months or even years. Now, this window is shrinking dramatically: Mythos has uncovered high-risk vulnerabilities in multiple projects that have existed for 10 to 27 years, which global security teams have failed to find after years of auditing and millions of fuzzing sessions; CrowdStrike's assessment is even more direct—the window between vulnerability discovery and exploitation has shrunk from months to minutes.

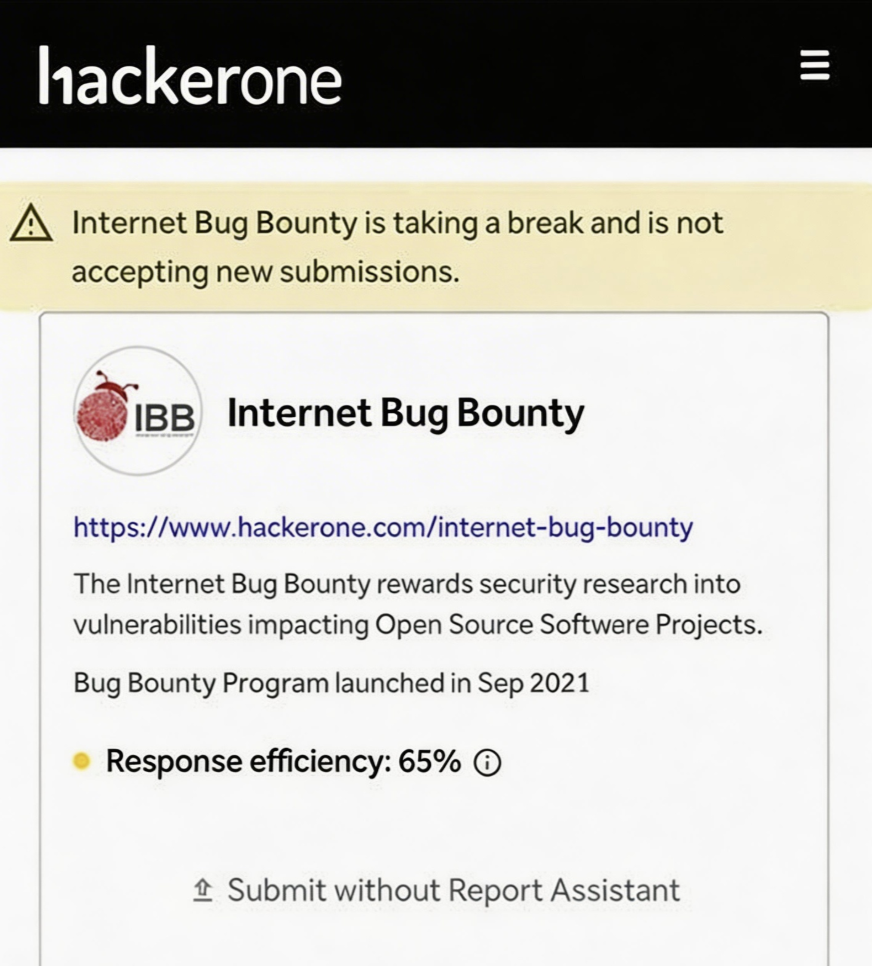

HackerOne IBB's suspension of report acceptance, Google's halt to AI-submitted vulnerability reports, and cURL's closure of its bounty program all send a consistent signal: the speed of discovery has surpassed the old processing chain. When external hackers can also use AI to continuously scan every open-source component you rely on, and even automate the process of vulnerability discovery, verification, and exploitation preparation, enterprises must keep up with their own proactive discovery capabilities. Otherwise, attackers will get there before you do.

3.2 Finding vulnerabilities is becoming increasingly common; the difference lies in who can identify valuable risks earlier and more accurately.

AI is drastically lowering the barrier to entry for discovering vulnerabilities. Thomas Ptacek put it bluntly: "Now everyone has a universal puzzle solver. In the future, the least valuable thing might be 'I've found a bunch of vulnerabilities.'"

However, this doesn't mean the real capability gap has disappeared. The difference lies in who can identify the most worthwhile targets earlier and who can transform the results into reliable inputs that must be taken seriously. Anthropic's approach when releasing Glasswing followed the same logic: the strongest capabilities were first given to 45 core vendors and defenders, allowing them to complete the investigation and hardening of critical infrastructure before attackers gained equivalent capabilities. This "defender-first" approach aligns with Zhuque's positioning: to identify key risks before attackers to help strengthen businesses, while also making the entire open-source ecosystem more secure through responsible vulnerability disclosure.

3.3 AI has lowered the barrier to finding vulnerabilities, but security experts' judgment and harness engineering capability remain scarce.

Seeing that AI can find vulnerabilities in bulk, many people immediately wonder whether ordinary users can do the same with agents. They can, but truly valuable results are hard to achieve, because the real challenge was never just identifying problems.

With the same model, the performance gap between different harnesses can be orders of magnitude. Anthropic's own data illustrates this: Opus 4.6 running on its own, without a harness, had a near-zero success rate at exploit development, yet with a human-guided harness it successfully exploited a FreeBSD NFS remote code execution vulnerability. The same logic applies to the Blue Team Bot's evolution from version 1.0 to 4.0: the leap in capability comes not only from a stronger model but also from the harness evolving from a single unassisted agent to fully autonomous multi-agent orchestration.

Identifying problems is just the beginning. The expert judgment behind the harness shows up in three things:

Choosing the right direction. We prioritized OpenClaw this time not because it is popular, but because it is already integrated into many enterprises' technology stacks, and its sandbox, plugin, and authentication boundaries are inherently high-risk attack surfaces. The Linux kernel was included because it sits directly beneath everything that businesses and endpoints depend on. Real capability is not about having as many targets as possible, but about knowing where to strike first for maximum value.

From single points to systematic coverage. Ordinary agent users typically scan for a vulnerability, report it, and move on to the next target as soon as they find a medium-risk issue. Mythos provides an industry-level reference: it independently chained 3-4 kernel vulnerabilities into a complete privilege escalation chain and linked 4 browser vulnerabilities into a full-chain exploit from JIT heap spray to sandbox escape. In this OpenClaw audit, the Blue Team Bot likewise pursued key attack surfaces continuously and covered them systematically: the 18 vulnerabilities were not the result of 18 independent scans but the product of sustained, in-depth exploration along the same type of protection mechanism.

Rigorous verification. AI can generate a large number of vulnerability-like findings, but not every one deserves to enter the formal pipeline. Anthropic used its most powerful model in Glasswing to identify thousands of zero-day vulnerabilities, yet still had professional security contractors manually review reports: in 89% of the 198 reviewed reports, the model's severity rating exactly matched that of the human experts, but Anthropic explicitly stated that it still would not relax its human verification standards. All 33 of our zero-day vulnerabilities were officially confirmed and acknowledged by the upstream projects because we held our verification standards and deliverables to a sufficiently accurate and rigorous bar. That is why the fixes were adopted quickly by the upstream communities and merged into their mainlines, translating into real security benefits for the entire ecosystem.

We believe the 33 zero-day vulnerabilities discovered by the Zhuque Blue Team Bot are just the beginning. As AI lowers the barrier to finding vulnerabilities, the real difference lies in who knows better where to point agents, how deep to go, how to attack in a stable and controlled way, and which results are worth delivering. Blue teams that can master AI and surface risks earlier, more accurately, and in closer alignment with business needs will let business teams run faster and more securely in the AI era.

Tencent Zhuque Lab, established in 2019 by Tencent's Security Platform Department, is a top-tier AI security lab focused on practical attack and defense and on cutting-edge research in AI security. Its research areas cover large-model security, AI agent security, AI-enabled security, and AI-generated content detection. The team has repeatedly helped well-known companies such as NVIDIA, Google, and Microsoft, as well as open-source communities such as OpenClaw, Linux, and Hugging Face, fix numerous high-risk vulnerabilities, and has received official public acknowledgments for this work. It has launched several AI security products, including the open-source AI red team security testing platform AIG (AI-Infra-Guard) and the Zhuque AI Detection Assistant. Its research has been widely published at top international security and AI conferences such as Black Hat, DEF CON, ICLR, CVPR, NeurIPS, and ACL, and it has published the monograph "AI Security: Technology and Practice".

Author: Tencent Zhuque Lab